RECORDING AVAILABLE

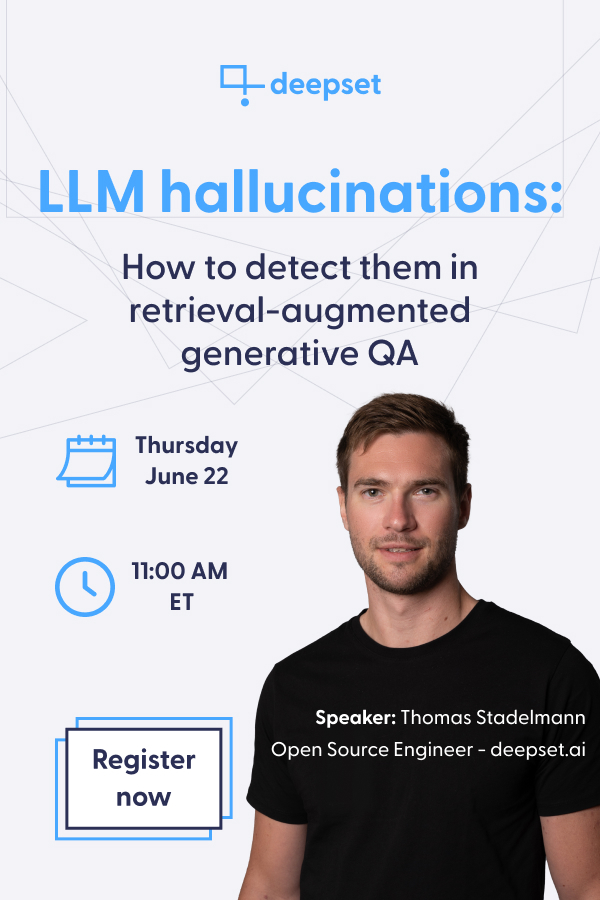

LLM hallucinations

How to detect them in retrieval-augmented generative QA

Hallucinations are one of the biggest challenges in running LLM-based applications, as they can significantly undermine the trustworthiness of your application. Retrieval-augmented QA not only enables us to run LLMs on any data but also helps mitigate hallucinations. However, even with retrieval augmentation, we cannot fully avoid them.

In this webinar, Thomas will show you current approaches to systematically detecting hallucinations, paving the way for automating this critical task.